Altos, a subsidiary of Acer, has launched a ‘Make in India’ AI server portfolio to reinforce India’s digital backbone and advance its sovereign AI ambitions. By manufacturing these servers locally, the company accelerates deployment timelines, enhances scalability, and broadens access to high-performance computing for enterprises, government agencies, and research institutions. At the center of this portfolio stands the BrainSphere R300 AI server, purpose-built to handle intensive AI workloads through advanced GPU integration and a highly efficient architecture.

This initiative aligns directly with India’s push for domestic manufacturing; moreover, it strengthens the national AI ecosystem by fusing global engineering expertise with localized production. As a result, it not only drives innovation but also enables large-scale AI adoption across critical sectors.

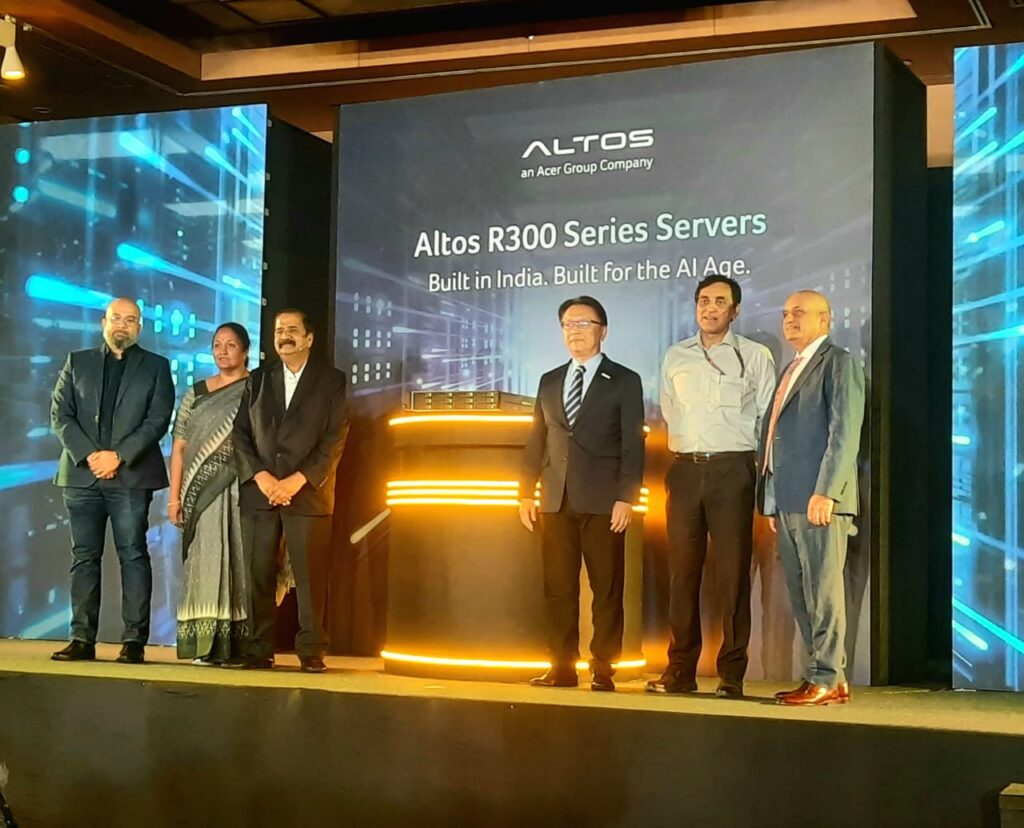

In an exclusive interaction with The Interview World on the sidelines of the Altos AI Server Launch program, Jackie CC Lee, CEO of Altos Computing Inc., articulates the strategic intent behind the launch. He further details the sector-specific value of the AI servers, evaluates the current trajectory of India’s AI landscape, and highlights Altos’ collaborations with the Government of India and domestic enterprises under the Make in India framework. Finally, he offers a forward-looking perspective on how AI will redefine outcomes for both individuals and organizations. The following are the key insights from this discussion.

Q: Could you provide detailed insights into the AI server launch, and the strategic objectives it aims to achieve?

A: We are introducing a high-end AI server powered by the NVIDIA Blackwell B200 GPU. It uses an air-cooled design and supports demanding AI model training workloads while handling AI inference with equal effectiveness.

In addition, we are rolling out a scalable architecture that includes 4-GPU and 8-GPU PCIe configurations. These systems support a wide range of accelerators, including the H200, the RTX PRO 6000 Blackwell, and the L40S. Together, these platforms represent our flagship offerings, which we are now bringing to the Indian market.

At the same time, we are expanding the portfolio with mid-range solutions. These include a 2U, dual-GPU system, as well as a compact AI workstation based on NVIDIA’s DGX Spark design. We have already commercialized these products in Taiwan, and we are formally introducing them to the Indian market this week.

Q: Which industries or application domains is this AI server designed to support most effectively?

A: In the government sector, these systems play a critical role in advancing sovereign AI initiatives. They deliver the massive computational power required for national-scale AI programs; accordingly, we have already established collaborations with local cloud service providers (CSPs) to support AI mission projects.

At the enterprise level, the need shifts toward scalability and cost efficiency. Most organizations are still at the early stages of their AI journey; therefore, they prefer to avoid large upfront investments in high-end GPU infrastructure. Instead, they begin with smaller deployments, such as 2-GPU or 4-GPU configurations, and scale incrementally. In this context, our 4-GPU and 8-GPU server systems, including the R680F7, align precisely with market demand by offering modular, scalable performance.

Similarly, the education sector presents a strong use case. Universities, research institutions, and faculty members actively engage in AI research and teaching workloads; consequently, they require flexible and scalable infrastructure. Our 2U, dual-GPU servers and 4U, 8-GPU systems address these needs effectively by balancing performance with adaptability.

In parallel, our DGX-class solution, referred to as the Altos GB10, targets customers deploying AI agents and domain-specific AI workloads across verticals such as manufacturing and healthcare. To strengthen this ecosystem, we are collaborating with local ISV partners that bring specialized software capabilities. We complement their solutions with our hardware and a robust resource management software platform; as a result, customers can seamlessly integrate generative AI and agent-based applications on top of our infrastructure. This integrated approach improves performance and efficiency and speeds the deployment of AI solutions across industries.

Q: How do you assess the current state of India’s AI landscape, and how resilient is it?

A: Multiple forecasts point to strong momentum. The most recent estimate I have seen from a market research firm places this year’s revenue for India’s AI market at approximately $17 billion, which already signals a substantial opportunity.

At the same time, the long-term trajectory appears even more compelling. During NVIDIA GTC last week, Jensen Huang stated in his keynote that global AI infrastructure could scale to a $1 trillion market within the next two years. Set against that $1 trillion, a $17 billion market represents only about 1.7 percent of the potential; therefore, India still has significant headroom for growth.

Moreover, the technological shift now underway will further accelerate this expansion. The industry is moving beyond traditional generative AI toward agentic AI systems. Applications such as OpenClaw illustrate this transition, where autonomous agents perform complex, multi-step tasks. As these agentic workloads scale, they generate tokens at a far higher rate than conventional generative models; consequently, they place far greater demands on compute infrastructure.

As a result, organizations will need to substantially expand their computing capacity to support this new class of AI workloads. This shift will not only intensify demand for high-performance infrastructure but also redefine how enterprises and governments plan their AI investments.

Q: In the context of ‘Make in India’ for AI, could you elaborate on your collaborations with the Government of India and domestic companies?

A: We work closely with local Electronic Manufacturing Services (EMS) partners while also sourcing critical components from within India. At the outset, we focus on rigorous qualification processes; specifically, we ensure that our proprietary system designs can be manufactured locally with complete accuracy, consistency, and reliability.

In parallel, we validate the quality and compatibility of components sourced from the Indian supply chain. To achieve this, we conduct extensive system-level testing, including compatibility checks, integration validation, and performance benchmarking.

Through this disciplined approach, we ensure that every ‘Make in India’ server meets the same stringent quality standards as those produced at our headquarters in Taiwan.

Q: How significant is AI in shaping the future for individuals and organizations, and how is it expected to transform the broader internet and IT landscape?

A: Over the past three decades, the internet has connected billions of devices and people; as a result, it has enabled entire industries such as mobile communications and e-commerce while catalyzing countless new applications. Building on that precedent, AI is poised to replicate, and potentially exceed, that impact. In fact, the only real constraint on its evolution is the limit of human imagination.

At the same time, this transformation is still in its earliest phase. The modern AI wave effectively began with the launch of ChatGPT in 2022; therefore, the ecosystem is barely three years old. Yet, within this short span, the industry has already introduced a surge of new ideas and paradigms. Platforms such as OpenClaw exemplify how rapidly innovation is advancing, particularly in the domain of agentic AI.

Moreover, the pace of this evolution far exceeds that of the early internet era. Consequently, the next three to five years will likely bring profound transformation, marked by accelerated innovation, disruptive applications, and significant new opportunities across sectors.